3. Using Amazon S3

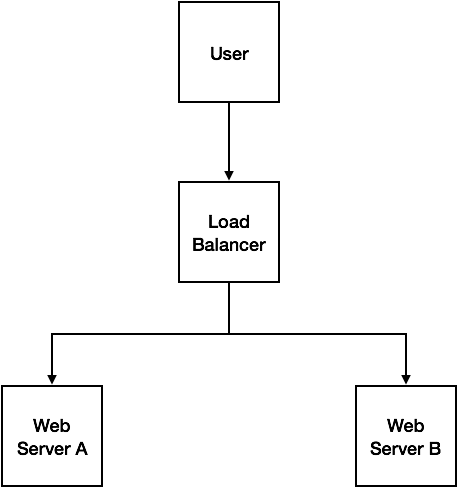

The examples in the previous chapter stored uploaded files on the local filesystem. While this is fine in development and for small applications, it can become problematic in production. It's common practice to deploy applications not just to a single Web server, but to multiple Web servers, with a load balancer sitting in front directing traffic and spreading the load between the servers. In this type of environment, you generally want to avoid storing any state or data on the Web servers themselves - and this includes uploaded files.

The above image illustrates the potential problem with this scenario. Every request the user makes to the application is directed to the load balancer, which then decides which server to route the request to. For the first request, where the user uploads a file, let's say the load balancer passes that request to Web Server A, which takes the file and stores it on its own filesystem. For the second request, where the user wants to retrieve the uploaded file, the load balancer routes it to Web Server B. When the application looks for the file in the local filesystem, it won't find it - as it's stored on Web Server A.
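The scenario can be reproduced in a few lines of plain PHP. The following is only a toy simulation: the WebServer and LoadBalancer classes are hypothetical stand-ins invented for illustration, and the round-robin routing is an assumption (real load balancers may use other strategies).

```php
<?php

// Hypothetical stand-in for a Web server with its own local filesystem.
class WebServer
{
    public $name;
    private $disk = [];

    public function __construct(string $name)
    {
        $this->name = $name;
    }

    public function store(string $path, string $contents): void
    {
        $this->disk[$path] = $contents;
    }

    public function has(string $path): bool
    {
        return isset($this->disk[$path]);
    }
}

// Hypothetical round-robin load balancer: each request goes to the next server.
class LoadBalancer
{
    private $servers;
    private $next = 0;

    public function __construct(array $servers)
    {
        $this->servers = $servers;
    }

    public function route(): WebServer
    {
        $server = $this->servers[$this->next];
        $this->next = ($this->next + 1) % count($this->servers);

        return $server;
    }
}

$balancer = new LoadBalancer([new WebServer('A'), new WebServer('B')]);

// Request 1: the upload lands on Web Server A.
$uploadServer = $balancer->route();
$uploadServer->store('private/photo.png', 'image bytes');

// Request 2: the retrieval lands on Web Server B, which has never seen the file.
$readServer = $balancer->route();
$found = $readServer->has('private/photo.png');

echo $uploadServer->name . ' stored the file; ' . $readServer->name
    . ($found ? ' found it' : ' could not find it') . PHP_EOL;
// prints "A stored the file; B could not find it"
```

Moving the file to storage that both servers share removes the dependency on which server handled the upload.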

There are several approaches to resolving this issue. One of the simplest is to use a cloud storage solution such as Amazon S3 (Simple Storage Service).

3.1. Configuring the S3 driver

To store files in Amazon S3, you first need to set up the S3 driver for your Laravel application. This requires an additional Composer package that is not included with Laravel by default. In your terminal, change to your project directory and run the following command:

$ composer require league/flysystem-aws-s3-v3:~1.0

This will install the S3 driver, as well as the AWS SDK for PHP and other dependencies. Once it's installed, you need to add your S3 credentials to your app. If you open the config/filesystems.php file in your project, you'll notice some environment variables that you can set to configure the S3 driver. Open the .env file in your project's root directory, and append the following lines to it. Be sure to add in your own AWS credentials here.

    FILESYSTEM_DRIVER=s3
    AWS_KEY=YOUR_AWS_KEY_HERE
    AWS_SECRET=YOUR_AWS_SECRET_HERE
    AWS_REGION=YOUR_AWS_REGION_HERE
    AWS_BUCKET=YOUR_AWS_BUCKET_HERE

I recommend that you create a new S3 bucket for the purpose of this book. Make sure that the bucket is created in the region you set in the AWS_REGION variable, or you'll run into errors. You'll also need to ensure that the AWS user associated with the AWS_KEY and AWS_SECRET variables has access to store and retrieve files in the given bucket.
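To see how these variables are consumed, it can help to look at the matching entries in config/filesystems.php. The fragment below is a sketch of what they might look like in a Laravel 5.4-era project, assuming the file reads each value through env(). The exact keys and defaults vary between Laravel versions, so treat your own copy of the file as authoritative.

```php
<?php

// config/filesystems.php (abridged sketch; your version may differ)
return [

    // Disk used when no disk is named explicitly; set to "s3" via .env
    'default' => env('FILESYSTEM_DRIVER', 'local'),

    'disks' => [

        's3' => [
            'driver' => 's3',
            'key'    => env('AWS_KEY'),
            'secret' => env('AWS_SECRET'),
            'region' => env('AWS_REGION'),
            'bucket' => env('AWS_BUCKET'),
        ],

    ],

];
```

If your file uses different variable names, use those names in your .env file instead.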

3.2. Storing and retrieving files in S3

At this point, if you try to upload a file by navigating to the upload form in your browser, you'll notice that it seems to work, but it fails when trying to retrieve the file. If you log into the AWS console and view the contents of your S3 bucket, you'll notice that the file was successfully uploaded (and resides in a private directory). By simply configuring Laravel to use the S3 driver by default, your app is now storing uploaded files in an S3 bucket.

Let's fix our app so that the image is displayed correctly following the file upload. In your project's routes/web.php file, change the file route closure as follows:

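    Route::get('file', function () {
        // Path of the stored file, as passed in the query string
        $path = request()->query('path');

        // Underlying Flysystem driver for the default disk (now S3)
        $fs = Storage::getDriver();
        $stream = $fs->readStream($path);

        $headers = [
            'Content-Type' => $fs->getMimetype($path),
            'Content-Length' => $fs->getSize($path),
        ];

        // Pass the S3 stream straight through to the response body
        return response()->stream(function () use ($stream) {
            fpassthru($stream);
            fclose($stream);
        }, 200, $headers);
    });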

At the end of the previous chapter, access to uploaded files was restricted so that it had to run through a Laravel route rather than being served directly from the Web server. The code above provides the same functionality. By default, files stored in S3 are not public, so when our form is submitted and the file is uploaded to S3, it is not accessible via a public URL. Here, we are reading the file from S3 as a stream, and then passing that stream through as a response.

If you try uploading a file using the upload form now, you should see the file in all its glory.

3.3. Serving private files directly from S3

If you're like me, the code in the last section may have made you uneasy. After all, we're essentially downloading a file to our Web server, just to serve it back to the user. Wouldn't it be nice if instead we could let the user access the file directly from S3 itself? The simplest approach to this is to set the uploaded file as public, and then display the image using its S3 URL. The problem with this approach is that anyone with the S3 URL for the image can access the file. In many cases, this is absolutely fine and we will cover how to do this in the next section. But for now, let's say that we still want our files to be private, but we want to serve them directly to the user from S3. Thankfully, this is made easy using temporary signed URLs.

In this example, we're not going to need a file route closure at all, as we are going to be generating an S3 URL that can be used to access the file for a limited timeframe. So feel free to remove the file closure from your routes/web.php file if you wish.

In the show route closure, we're currently loading the image by passing through the path to the file route as follows:

$url = "/file?path=$path";

Change this line to the following:

$url = Storage::temporaryUrl($path, '+10 minutes');

Return to your upload form and submit it. You'll notice that the image still displays. If you open your browser's developer tools and inspect the request for the image in the Network tab, you'll notice that the image is loaded from a URL that looks something like this:

https://yourbucketname.s3-yourregion.amazonaws.com/private/1501754187-5982f34b3a67a.png?X-Amz-Content-Sha256=UNSIGNED-PAYLOAD&X-Amz-Algorithm=AWS4-HMAC-SHA256&X-Amz-Credential=YOURAWSKEY%2F20170803%2Feu-west-1%2Fs3%2Faws4_request&X-Amz-Date=20170803T101407Z&X-Amz-SignedHeaders=host&X-Amz-Expires=600&X-Amz-Signature=c5ebaebddd96a8e19e80861f263126e76d64ec39bfd632aaa5521e378cd0c996

This URL will enable access to the file for the duration specified in the temporaryUrl method call, in this case 10 minutes. This is a very convenient way of providing users access to private files in S3 without making the files permanently publicly available. In theory, a user could share this link with others and they could use it to access the file - but if they try to do so once the specified timeframe has elapsed it will no longer work.
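The expiry is visible in the URL itself: X-Amz-Date records when the URL was signed (in UTC) and X-Amz-Expires gives its lifetime in seconds. As a rough illustration, the sketch below works out the cut-off time from those two parameters. The presignedUrlExpiry function is a hypothetical helper written for this example; it is not part of Laravel or the AWS SDK, and it does not verify the signature.

```php
<?php

/**
 * Hypothetical helper: work out when a presigned S3 URL stops working,
 * based on its X-Amz-Date (signing time, UTC) and X-Amz-Expires
 * (lifetime in seconds) query parameters.
 */
function presignedUrlExpiry(string $url): DateTimeImmutable
{
    parse_str((string) parse_url($url, PHP_URL_QUERY), $query);

    if (!isset($query['X-Amz-Date'], $query['X-Amz-Expires'])) {
        throw new InvalidArgumentException('URL has no X-Amz-Date/X-Amz-Expires parameters.');
    }

    // X-Amz-Date looks like 20170803T101407Z; "!" resets unspecified fields.
    $signedAt = DateTimeImmutable::createFromFormat(
        '!Ymd\THis\Z',
        $query['X-Amz-Date'],
        new DateTimeZone('UTC')
    );

    if ($signedAt === false) {
        throw new InvalidArgumentException('X-Amz-Date is not in the expected format.');
    }

    return $signedAt->modify('+' . (int) $query['X-Amz-Expires'] . ' seconds');
}

// Shortened version of the example URL above (only the relevant parameters).
$url = 'https://yourbucketname.s3-yourregion.amazonaws.com/private/1501754187-5982f34b3a67a.png'
    . '?X-Amz-Algorithm=AWS4-HMAC-SHA256'
    . '&X-Amz-Date=20170803T101407Z'
    . '&X-Amz-Expires=600';

$expiry = presignedUrlExpiry($url);

echo $expiry->format('Y-m-d H:i:s') . ' UTC' . PHP_EOL;
// prints "2017-08-03 10:24:07 UTC"
```

For the example URL, signed at 10:14:07 with a lifetime of 600 seconds, requests made after 10:24:07 UTC would be rejected by S3.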

NOTE

The temporaryUrl method was introduced in Laravel 5.4.31.

3.4. Serving public files directly from S3

If you want to store uploaded files in S3 and have them publicly accessible via a fixed public URL, you'll need to make a couple of minor changes to your project. The first change is in how the file is stored in S3 when it is uploaded. By default, files uploaded to S3 will be private. You can change this setting at a bucket level if you like - making all or a subset of files public automatically. To do this, you need to log in to the AWS console, navigate to your S3 bucket and create a bucket policy.
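As a rough idea of what such a bucket policy contains, the sketch below builds one as a PHP array and prints it as JSON, ready to paste into the policy editor. The bucket name and the public/ prefix are placeholders chosen for illustration, not values used elsewhere in this chapter. Depending on your account and bucket settings, S3's Block Public Access feature may also need to be adjusted before a public policy takes effect.

```php
<?php

// Hypothetical values: replace with your own bucket name and the key prefix
// you want to expose publicly.
$bucket = 'YOUR_AWS_BUCKET_HERE';
$prefix = 'public/';

// Allow anyone to read (but not list, write, or delete) objects under $prefix.
$policy = [
    'Version' => '2012-10-17',
    'Statement' => [
        [
            'Sid' => 'PublicReadForPrefix',
            'Effect' => 'Allow',
            'Principal' => '*',
            'Action' => 's3:GetObject',
            'Resource' => "arn:aws:s3:::{$bucket}/{$prefix}*",
        ],
    ],
];

// Print JSON that can be pasted into the bucket policy editor.
$policyJson = json_encode($policy, JSON_PRETTY_PRINT | JSON_UNESCAPED_SLASHES);

echo $policyJson . PHP_EOL;
```

Restricting the Resource to a prefix keeps everything outside that prefix private, which is usually safer than opening up the whole bucket.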

Alternatively, you can set the visibility of the file when you upload it. Thankfully, Laravel makes it really easy to do this. In your POST request closure in the routes/web.php file, find the following line:

$path = $file->storeAs('private', $filename);

To store the file publicly, simply change this to the following:

$path = $file->storePubliclyAs('private', $filename);

This will change how the file is stored in S3, but our show route is still using the temporaryUrl method to get a temporary, expiring link to the file. In the show route closure, find the following line:

$url = Storage::temporaryUrl($path, '+10 minutes');

Replace this line with:

$url = Storage::url($path);

Now, upload a file using the form once again. This time, it will be uploaded and made public so anyone with the URL can view it, and the show page will fetch its public URL using the url method on the Storage facade. Once again, you should see the image. The url method returns a URL similar to the following:

https://yourbucketname.s3-yourregion.amazonaws.com/private/1501767361-598326c1bcf6a.jpeg

Because this URL is fixed and doesn't expire, your browser can cache the file, and it won't need to be re-downloaded on subsequent visits for as long as it remains cached.

3.5. Summary

Once again, Laravel makes it easy for us to handle uploaded files, and moving from a local filesystem storage model to a cloud service like Amazon S3 is really straightforward. This chapter covered uploading files to S3 and serving them, first by streaming them through the Web server and then by generating URLs so they could be downloaded directly from S3 itself. The next chapter covers a similar concept - enabling files to be uploaded directly to S3 from the client's machine, bypassing the Web server altogether.